Privacy in crypto has always been a strangely polarizing, “all-or-nothing” game. Depending on who you talk to, it’s either a philosophical battle cry, a regulatory red flag, or simply a feature request.

But recently, something has shifted. You can feel the urgency building among institutions that want to move on-chain without exposing their customers’ data, and among users exhausted by breaches and surveillance. Policymakers are moving too, as a privacy roundtable recently organized by the SEC shows.

I’ve worked in law, fintech, and now crypto long enough to see how hard it is to reconcile privacy, trust, and innovation:

In Big Law, privacy meant negotiating limitation-of-liability and indemnification clauses.

In fintech, privacy often meant checking regulatory boxes — collecting vast amounts of data while trying to reduce friction and not scare customers.

In crypto, it has meant navigating a space that oscillates between extreme transparency and covert secrecy.

What excites me now, and why I joined o1Labs, is that zero-knowledge technology finally breaks this binary. Zero-knowledge proofs let us treat privacy not as a choice between “show everything” and “show nothing,” but as something programmable, contextual, and verifiable.

This is what we call programmable disclosure.

Our Understanding of Privacy Is Evolving

The notion of privacy is woven into constitutions, legal systems, and cultural norms. Consider the Fourth Amendment to the U.S. Constitution, part of the Bill of Rights, which protects against unreasonable searches and seizures. But every major technological shift forces us to renegotiate what privacy should look like.

In the era of blockchains, we accidentally created an environment where the default setting is permanent exposure. Every transfer, every investment, every payment lives on-chain forever. It’s traceable, linkable, and often tied back to a real identity faster than most people realize.

On the other hand, the tools built in response, such as mixers, opaque protocols, and fully hidden transactions, triggered understandable concern from regulators and institutions. They solved the confidentiality problem, but not the trust problem.

Neither extreme reflects how people actually live. Most of us don’t want to be completely invisible; we just don’t want our financial life posted like a public diary. Institutions don’t object to privacy; they object to systems they can’t audit. Regulators don’t want to peer into every user’s data; they want assurance that rules are being followed.

The real challenge isn’t privacy itself; it’s misalignment around what privacy should mean.

A Better Way To Think About Privacy

Programmable disclosure is my attempt to articulate a more practical and universal framing.

Instead of forcing developers, users, and businesses to choose between transparency and secrecy, programmable disclosure allows you to reveal only what’s necessary for a given interaction — nothing more, nothing less.

Zero-knowledge proofs make this possible. They let you prove a fact without revealing the underlying data that supports it:

You can prove you’re over 18 without sharing your birthdate.

You can prove funds came from a legitimate source without exposing your income.

You can prove eligibility for a clinical trial without handing over your full medical history.

You can show supply-chain compliance without giving competitors a map of your vendors.
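To make the mechanism behind these examples concrete, here is a minimal sketch in Python of one of the simplest zero-knowledge proofs: a Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. The prover convinces a verifier that it knows a secret `x` behind a public value `y = g^x mod p` while revealing only `y`, a commitment `t`, and a response `s`. Treat it as an illustration under stated assumptions. It is not an age or compliance proof, and it is not how Mina or o1Labs tooling implements proofs. The group parameters are toy values far too small to be secure, and the names `prove`, `verify`, and `challenge` are hypothetical.

```python
import hashlib
import secrets

# Toy group (assumption, insecure): p = 2q + 1 is a safe prime and g = 4 is a
# quadratic residue, so it generates the subgroup of prime order q.
P = 2039
Q = 1019
G = 4


def challenge(*values: int) -> int:
    """Fiat-Shamir challenge: hash the public transcript into Z_q."""
    data = ",".join(str(v) for v in values).encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret_x: int) -> tuple[int, int, int]:
    """Prove knowledge of x with y = g^x mod p, revealing only (y, t, s)."""
    y = pow(G, secret_x, P)        # public statement
    r = secrets.randbelow(Q)       # fresh random nonce, never revealed
    t = pow(G, r, P)               # commitment to the nonce
    c = challenge(G, y, t)         # challenge derived from public values only
    s = (r + c * secret_x) % Q     # response: the nonce r masks the secret x
    return y, t, s


def verify(y: int, t: int, s: int) -> bool:
    """Check g^s == t * y^c mod p; the verifier never learns x."""
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x = secrets.randbelow(Q - 1) + 1   # the prover's secret, never sent
y, t, s = prove(x)
print(verify(y, t, s))             # True
print(verify(y, t, (s + 1) % Q))   # False: a tampered response is rejected
```

Verification succeeds because g^s = g^(r + c·x) = t · y^c mod p, while the random nonce keeps `s` from leaking `x`. Production systems, including Mina’s, use zk-SNARKs that can encode richer statements (such as “this birthdate is more than 18 years ago”) over secure parameters.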

It’s privacy that still feels honest.

It’s compliance that doesn’t require surveillance.

It’s trust without overexposure.

I spoke about this shift — and why it matters for the future of verifiable computing — during my recent talk at Pragma Buenos Aires. You can watch the recording to dive deeper into the ideas behind programmable disclosure and the questions it raises for policy, compliance, and everyday product design.

Why Mina Fits Naturally Into This Moment

This is exactly the environment where programmable disclosure shines — and why Mina’s architecture resonates so strongly.

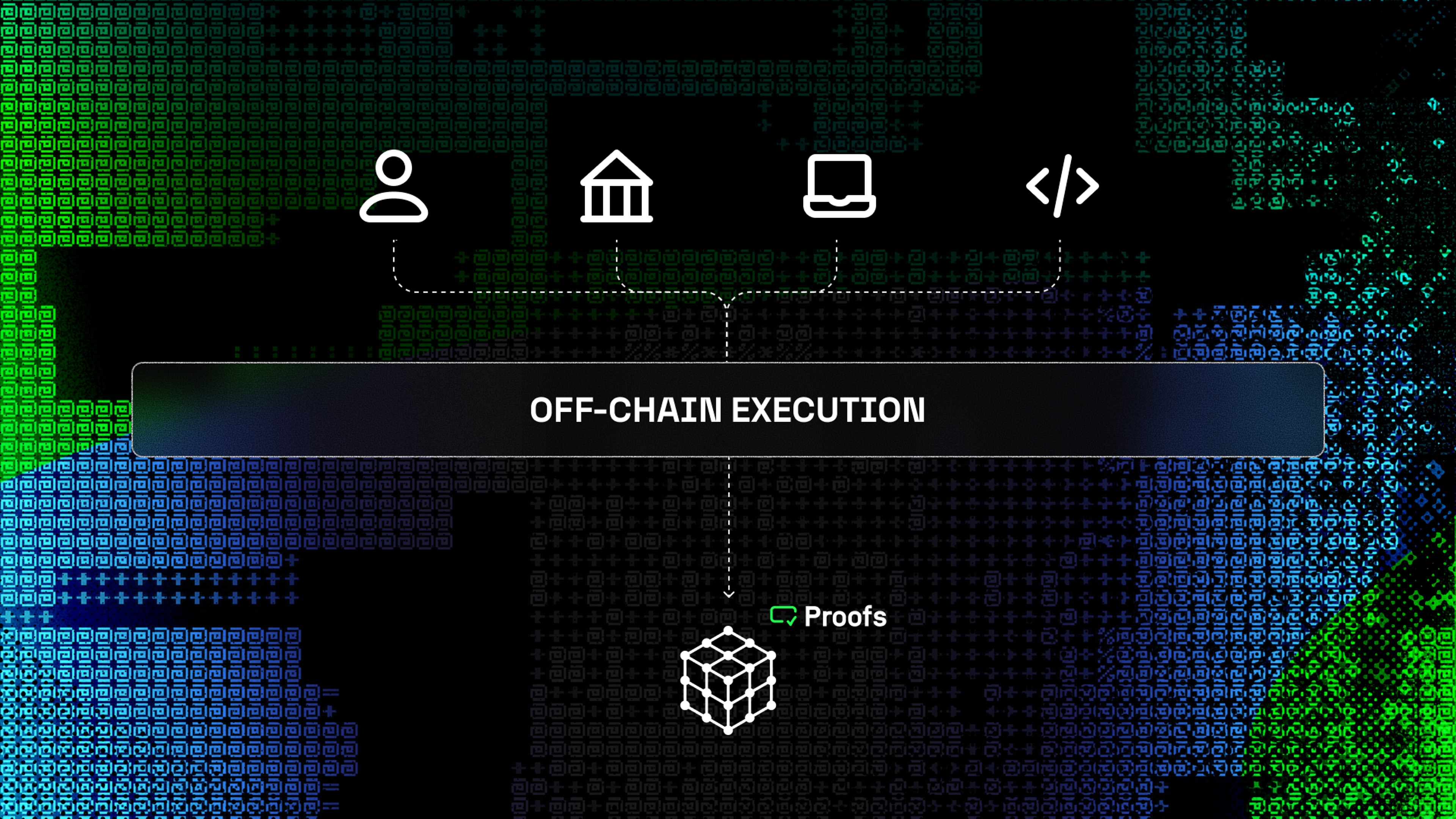

Mina didn’t tack privacy on after the fact. The protocol was designed from day one around the idea that blockchains should verify proofs, not store data. Off-chain execution, proof submission, and recursion aren’t just technical choices; they’re privacy-by-design principles.

Because only proofs go on-chain, developers can decide precisely what is revealed and under what conditions. And because Mina is succinct and expressive, this doesn’t come with prohibitive cost or complexity.

In practice, this means:

Users keep their data.

Institutions get verifiable guarantees.

Applications avoid inheriting massive compliance risk.

Developers can compose rich, private logic without heavy infrastructure.

As industries embrace verifiable AI, privacy-preserving identity, and on-chain finance with global compliance implications, control over disclosure becomes the differentiator. It’s the capability that makes real-world adoption possible.

This is where Mina should lead: as the foundation for applications that can only exist with both privacy and verifiability.

Looking Ahead

Programmable disclosure isn’t a slogan. It’s a shift in how we design systems, how we build applications, and how we communicate about privacy. It requires continued progress in proof systems and applications, better education for policymakers, and product experiences that make the benefits intuitive.

But we’re no longer waiting on the core technology. It’s here.

Now comes the work of applying it in ways that solve real problems for real people.

At o1Labs, we’re focused on helping developers build applications that feel private, trustworthy, and aligned with the world we actually live in — not the one we inherited from early blockchain design decisions.

If you’re exploring applications that need confidentiality, auditability, or policy compliance without data exposure, I’d love to hear from you. Because the future of privacy isn’t about hiding. It’s about revealing only what’s necessary — and finally giving control back to the people who should have had it all along.